Private Industrial IoT Data Acquisition Platform

From 2017 to 2019, I developed, maintained, and operated a data acquisition platform for industrial customers at Stack41. Unlike typical IoT offerings, ours ran on a stack we controlled end to end (Stack41 was primarily an infrastructure-as-a-service vendor), which let us offer stronger security and reliability guarantees for our cloud. The service used no proprietary software anywhere, which let me build customer dashboards very quickly.

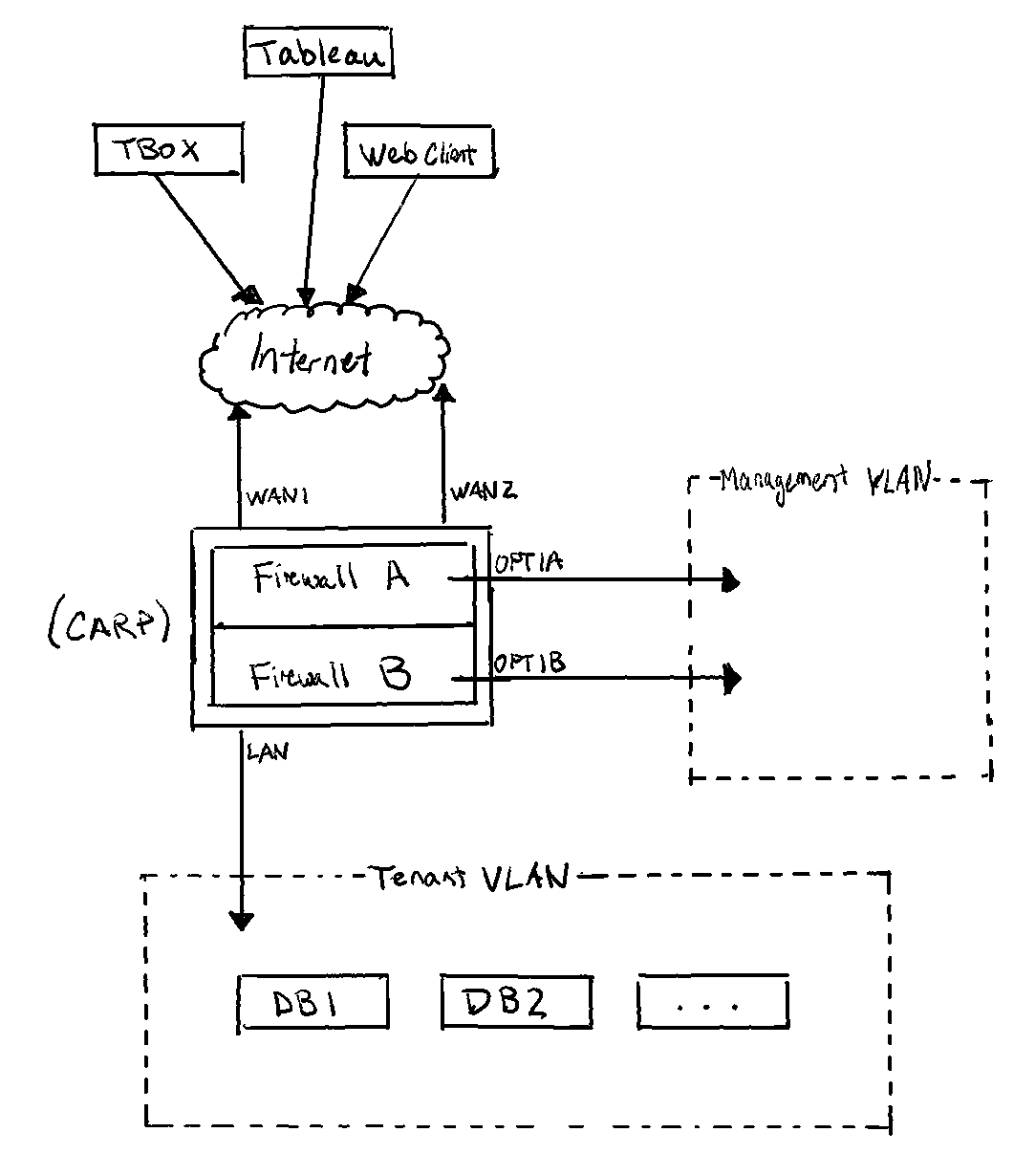

Each tenant (customer) received its own Layer 2 network in our data center, completely isolating tenants from one another. Each tenant was also given a static public IP address that routed to a redundant application stack.

At the front of the application stack sat two pfSense servers configured as a CARP pair: if one went down, the other took over its IP address. These routed a private virtual network for the tenant’s services (OpenVPN, BIND, PostgreSQL+TimescaleDB, and Grafana), each of which was also deployed redundantly. If a VM running one of these services failed, a standby VM took its place.
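The failover behavior of the router pair can be modeled in a few lines. This is a simplified sketch, not pfSense’s implementation: it assumes the common setup in which the primary is given a lower advertisement skew (advskew) than the secondary, and it reduces “still sending advertisements” to a boolean. Real CARP also depends on the base advertisement interval, timeouts, and preemption settings. The host names are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class CarpHost:
    name: str
    advskew: int        # lower skew advertises more often, so it wins the master role
    alive: bool = True  # simplification: True while the host is sending advertisements


def current_master(hosts: list[CarpHost]) -> str | None:
    """Return the host that would hold the shared virtual IP.

    Among hosts still advertising, the one with the lowest advskew is master.
    Returns None if no host is advertising.
    """
    advertising = [h for h in hosts if h.alive]
    if not advertising:
        return None
    return min(advertising, key=lambda h: h.advskew).name


if __name__ == "__main__":
    primary = CarpHost("pfsense-a", advskew=0)
    secondary = CarpHost("pfsense-b", advskew=100)
    pair = [primary, secondary]
    print(current_master(pair))  # pfsense-a holds the VIP
    primary.alive = False        # primary stops advertising
    print(current_master(pair))  # pfsense-b takes over the VIP
```

Because the backup only has to notice missing advertisements and claim the address, tenants keep using the same public IP through a router failure.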

Each tenant’s network provided name resolution, database, dashboarding, and reporting services, and user management was handled in the tenant’s router configuration. Together these services formed the basis of the IoT offering: a redundant way to store (database), access (users and visualization), and use (tenant-specific VMs) their data. Our hardware was housed in a Tier 3+ data center with redundant power and cooling and armed security.

These VMs were configured with ShRx, a tool I wrote that acted as a lightweight “Docker for VMs.” It let me quickly produce (and reproduce) VM disk images for specific tenants. Software updates were applied by replacing the entire boot disk; data and configuration lived on a separate disk that updates never touched.
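ShRx’s interface is not described here, so the following is a hypothetical sketch of the update model only. Each VM definition points at a versioned boot image and a persistent data disk, an update swaps only the boot image, and image names are derived from a hash of the build spec so that the same spec always reproduces the same image name. The names `TenantVM`, `apply_update`, and `image_id` are illustrative assumptions, not part of ShRx.

```python
from __future__ import annotations

import hashlib
import json
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class TenantVM:
    tenant: str
    boot_image: str  # read-only image, replaced wholesale on every update
    data_disk: str   # persistent disk holding data and configuration


def image_id(spec: dict) -> str:
    """Derive a deterministic image name from a build spec.

    Keys are sorted before hashing, so the same spec always yields the same name.
    """
    digest = hashlib.sha256(json.dumps(spec, sort_keys=True).encode()).hexdigest()
    return f"{spec['tenant']}-{digest[:12]}.img"


def apply_update(vm: TenantVM, new_image: str) -> TenantVM:
    """Return a VM definition that boots from a new image.

    Only the boot disk reference changes; the data disk carries over untouched,
    so an update (or a rollback) is just a disk swap.
    """
    return replace(vm, boot_image=new_image)
```

Rolling back is the same operation in reverse: call `apply_update` with the previous image name, and the data disk is still the one the tenant has always used.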

A gateway device I built could connect to a tenant’s private cloud over a VPN, bridging it to the customer’s industrial control processes. The device was an x86 Celeron computer with 8 GB of memory, a 256 GB SSD, and 2G/3G/4G cellular, running Alpine Linux. I customized the OS with services I wrote to maintain the cellular and VPN connections and handle failures, then repackaged it to boot into RAM, which reduced SSD wear and improved performance. The box continuously streamed data into a database in the tenant’s cloud. If the connection failed, it buffered data locally and uploaded the backlog once service was restored, so data loss was rare.
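The buffering behavior is a store-and-forward pattern. Below is a minimal sketch, not the gateway’s actual code, assuming a local SQLite file as the buffer and a hypothetical `upload` callable that raises `ConnectionError` while the VPN or cellular link is down. Every reading is written locally first and deleted only after an upload succeeds.

```python
from __future__ import annotations

import sqlite3
from typing import Callable, List, Tuple

Row = Tuple[float, str, float]  # (timestamp, tag, value)
Uploader = Callable[[List[Row]], None]


class StoreAndForward:
    """Buffer readings locally while the uplink is down; replay them in order."""

    def __init__(self, path: str = ":memory:") -> None:
        self.db = sqlite3.connect(path)
        self.db.execute(
            "CREATE TABLE IF NOT EXISTS backlog ("
            " id INTEGER PRIMARY KEY AUTOINCREMENT,"
            " ts REAL NOT NULL, tag TEXT NOT NULL, value REAL NOT NULL)"
        )
        self.db.commit()

    def record(self, ts: float, tag: str, value: float, upload: Uploader) -> None:
        # Persist first, so the reading survives a failed upload or a reboot.
        self.db.execute(
            "INSERT INTO backlog (ts, tag, value) VALUES (?, ?, ?)", (ts, tag, value)
        )
        self.db.commit()
        self.flush(upload)

    def flush(self, upload: Uploader, batch_size: int = 500) -> int:
        """Send buffered rows oldest-first; return how many were delivered."""
        sent = 0
        while True:
            rows = self.db.execute(
                "SELECT id, ts, tag, value FROM backlog ORDER BY id LIMIT ?",
                (batch_size,),
            ).fetchall()
            if not rows:
                return sent
            try:
                upload([(ts, tag, value) for _, ts, tag, value in rows])
            except ConnectionError:
                return sent  # link is down: keep the rows and retry on the next call
            # Remove the batch only after the upload succeeded.
            self.db.execute("DELETE FROM backlog WHERE id <= ?", (rows[-1][0],))
            self.db.commit()
            sent += len(rows)

    def pending(self) -> int:
        return self.db.execute("SELECT COUNT(*) FROM backlog").fetchone()[0]
```

Because rows are deleted only after a successful upload, a crash between the two steps can send a batch twice, so the receiving database should tolerate duplicates (for example, with a unique key on timestamp and tag).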

I wrote the data collection software using a modular device pattern, so Stack41 could add support for new devices by flipping bits in the database. In most deployments, it used pylogix to communicate with Allen-Bradley ControlLogix PLCs. I also added generic HTTP/CSV support and built a demonstration of it using drinking birds.
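One common way to build such a modular device pattern is a registry of per-device-type drivers, with database rows deciding which devices are polled. The sketch below assumes that design; the `enabled` flag, the `kind` values, and the function names are hypothetical. It includes only an offline CSV driver; a ControlLogix driver built on pylogix would be registered the same way under its own `kind`.

```python
from __future__ import annotations

import csv
import io
from typing import Callable, Dict, List, Tuple

Reading = Tuple[str, float]  # (tag, value)
Driver = Callable[[dict], List[Reading]]

DRIVERS: Dict[str, Driver] = {}


def driver(kind: str) -> Callable[[Driver], Driver]:
    """Decorator that registers a read function for one device type."""
    def register(fn: Driver) -> Driver:
        DRIVERS[kind] = fn
        return fn
    return register


@driver("http_csv")
def read_csv_device(cfg: dict) -> List[Reading]:
    # A real driver would fetch this text over HTTP; here it is passed in
    # through the config row so the sketch runs offline.
    rows = csv.DictReader(io.StringIO(cfg["payload"]))
    return [(row["tag"], float(row["value"])) for row in rows]


def poll(devices: List[dict]) -> List[Reading]:
    """Poll every enabled device whose type has a registered driver."""
    readings: List[Reading] = []
    for dev in devices:
        if dev.get("enabled") and dev["kind"] in DRIVERS:
            readings.extend(DRIVERS[dev["kind"]](dev))
    return readings
```

With this shape, supporting a new device type means registering one function, and switching a device on or off is only a change to its row.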

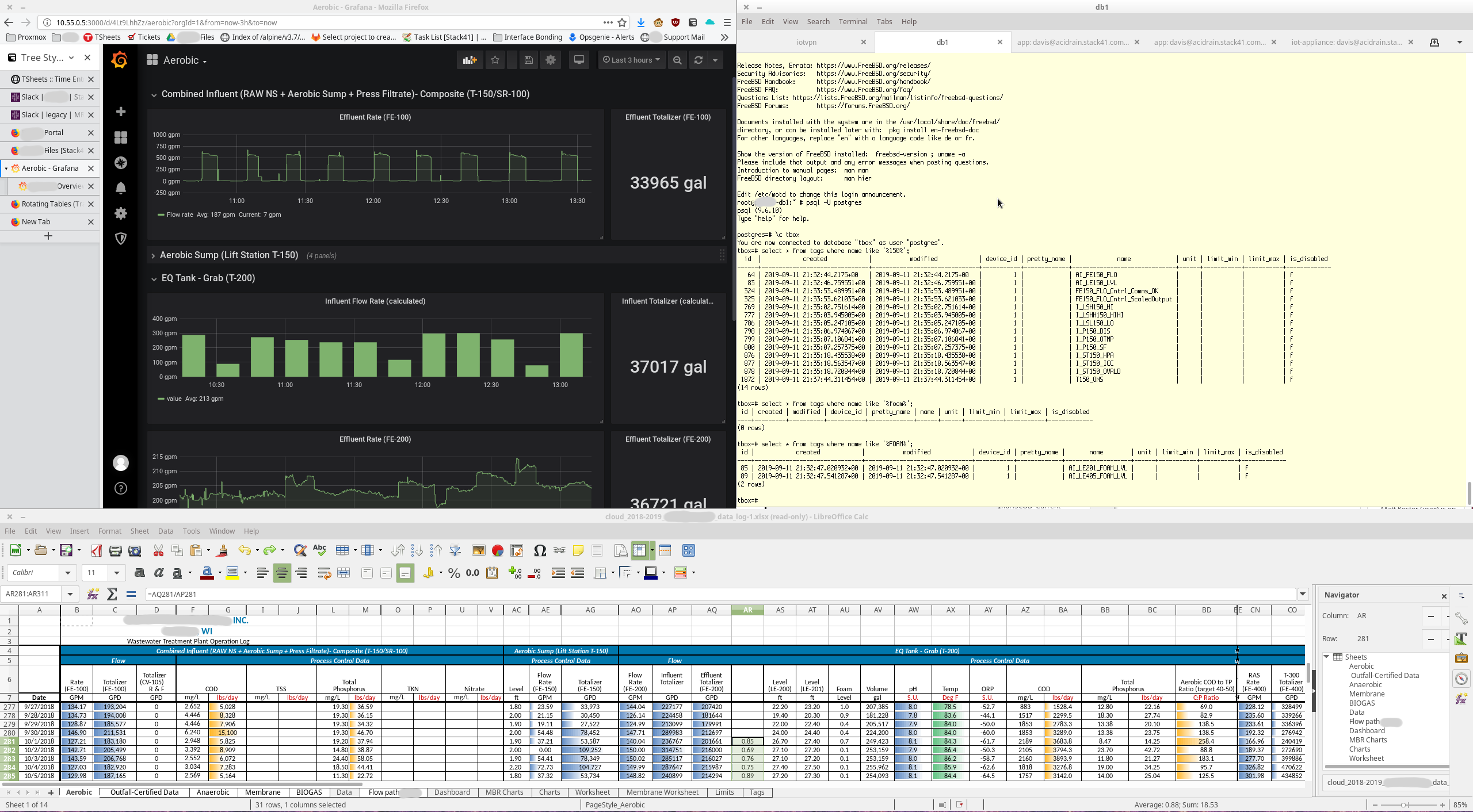

Example

Below is an example from a wastewater treatment customer whose industrial control spreadsheet I transcribed into a live dashboard. This involved writing many queries to calculate the total volume processed, among other adjustments.
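Totalizing flow is a typical example of such a calculation. If a meter reports a flow rate rather than a running total, the volume is the time integral of the rate, approximated from samples as the sum of (q_i + q_{i+1}) / 2 × Δt_i over consecutive samples (the trapezoidal rule). The customer’s actual queries are not reproduced here. This Python sketch only illustrates the arithmetic and assumes timestamped samples in gallons per minute.

```python
from __future__ import annotations

from datetime import datetime
from typing import Iterable, Tuple

Sample = Tuple[datetime, float]  # (timestamp, flow rate in gallons per minute)


def total_volume(samples: Iterable[Sample]) -> float:
    """Integrate flow-rate samples into total gallons using the trapezoidal rule."""
    ordered = sorted(samples)  # sorts by timestamp first
    total = 0.0
    for (t0, q0), (t1, q1) in zip(ordered, ordered[1:]):
        minutes = (t1 - t0).total_seconds() / 60.0
        total += (q0 + q1) / 2.0 * minutes  # average rate times elapsed minutes
    return total
```

Long gaps between samples, such as during an outage, are interpolated linearly, so they may over- or underestimate the true volume.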